What AI can (& cannot) do today & why you should pay attention

This is your guide to understanding what AI can do amazingly well today, so you can make the most of it. Look beyond the hype, and understand the limits. Knowing about AI does not, and should not, need you to be a technical wizard. Using it should ask even less of you.

First, let me make it very clear that I am not an AI researcher, and I do not code (at least not now). I wouldn't be able to hold down a software engineering job to save my life. And this is surely not an attempt to help you navigate the tech. But do remember that you don't need to be a civil engineer to understand what roads can do to an economy.

Second, I would also like to make it clear that I am not an investor or a journalist; I have skin in the game. I am building Magic Studio with a small but incredibly cool team, and over a million people use our products every month. And the soul of our products is the AI that gives everybody super-powers. So I am confident I have an insider's view on what this whole AI business is about.

So let's dive in.

So what's all the fuss about?

Why are so many people talking about AI today?

Is this like the dot-com bubble?

Is this like Web3?

Is this a solution looking for a problem that doesn't even exist?

AI research has been going on for decades, and plenty of amazing things in the world have been built with AI as a key ingredient. Nuance, for example, built a speech recognition system based on machine-learned probabilities in the 90s. Some of you might also remember the Netflix Prize, which championed the idea that improving algorithms at scale could deliver value.

So then, not new.

But has something changed?

Well yes, of course. And while I won't go into the details, something new has emerged out of all those efforts to build systems that mimic (mostly human) intelligence. This "emergence" is the state where systems that have learnt from a limited context seem to have abilities that expand outside of that context. An example: an AI model trained on Wikipedia text to complete sentences can now "answer" math questions. The nature and extent of this emergence means that the depth and range of AI's abilities are expanding rapidly.

And that has so many of us excited. And some scared.

Many compare the AI buzz to the Web3 hype of the last few years, and a few to the dot-com bubble of the late 90s. But the march of AI actually looks a lot like something else: the microprocessor. The microprocessor has been improving rapidly for a long time; progress along Moore's law is more rapid than most things in our technological history. And it has underpinned a large part of the massive progress we have made in nearly everything over the last few decades. AI looks a lot like this, and now has some track record of showing the same signs of rapid improvement. This time, though, the rate-limiting factor for progress seems to be data (quantity & complexity) rather than (mostly) physics. But since software in general is more agile than hardware, we are probably doubling computational capabilities every 6 months!
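To put those two doubling rates side by side, here is a small arithmetic sketch. It assumes the commonly cited two-year doubling period for Moore's law and takes the six-month figure above at face value; both are rough rules of thumb, not measurements, and the function name is just something I made up for illustration.

```python
def growth_factor(years, doubling_period_years):
    """How many times larger something gets if it doubles every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)

# Assumed doubling periods (rules of thumb, not measurements).
MOORE_DOUBLING_YEARS = 2.0  # commonly cited figure for Moore's law
AI_DOUBLING_YEARS = 0.5     # the "every 6 months" estimate from the text

for years in (2, 5, 10):
    moore = growth_factor(years, MOORE_DOUBLING_YEARS)
    ai = growth_factor(years, AI_DOUBLING_YEARS)
    print(f"{years:>2} years: two-year doubling x{moore:,.0f}, six-month doubling x{ai:,.0f}")
```

Over ten years, a two-year doubling gives a 32-fold gain, while a six-month doubling gives roughly a million-fold gain. That gap is why the pace feels so different, if the six-month estimate holds.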

This may run out of steam soon, or run away like nothing we have seen before. I am not interested in predicting what will happen. Suffice to say there are rapid (like never before) improvements in AI, and a lot of things are changing as a result.

So great there's all this power. What can we do with it?

I am going to break this down into 3 ways that AI systems are being created, built, imagined and used, mostly centered around the information they process and deliver:

- Text

- Visual Content

- Everything Else

The word machine

So AI has been dealing with text for some time now. We've had our share of laughs with auto-complete. But then something changed with the "large" language models of recent years. Now trained on massive sets of data, at the scale of the internet itself, AI seems to be able to generate larger bodies of text that stay coherent throughout. The effect is that these texts are often indistinguishable from something created by humans. I will deliberately not call on the Turing test here, because that requires a more nuanced understanding of things. But in effect these text models have become very powerful at generating text that conveys meaningful information to readers.

Great! So we can throw out our keyboards, and let machines do all the typing?

Well, not quite, not yet.

So there is a thing about machines spewing information, and how we perceive it, that results in the idea of "hallucination": seemingly confident output of false information. The problem is a little complex. Sometimes it is easy to verify that information is false, for example, the price of a television. Sometimes it's quite hard, for example, which television is the best out of a set of televisions.

The reason this happens is critical to understanding what AI is doing.

AI is generating a coherent, relevant text construction that matches the request and looks in place in the vast amount of text it has seen. Truth, however, is not an explicit ingredient in the text. So the AI doesn't have a barometer for truth, a layer that extends beyond the semantic soundness of the text.

There are also cultural ideas like credibility, authority and trust that influence our interaction with these systems. But that is a heady mix for another discussion, another day.

So what can you do with all this powerful text AI today?

For those who need to create text of various kinds, textual AI is already massively powerful and productive. The ability to instantly expand ideas, and find seed constructions to power human creativity, is already here. While the most amazing things that humans are writing are probably still out of reach for AI-assisted attempts, human creators with AI sidekicks are already vastly more effective and productive than those of us toiling away at the keys. AI is perhaps unlikely to ever replace human creativity completely, because I fundamentally believe the need to create is human. Stranger things have happened though!

For those who need to process information (yes, all you chatbot aficionados), things are a bit tricky. For limited information contexts, like parsing your website or support content and surfacing answers to questions, AI can be remarkably effective. The broader you make this scope, the more likely it is that AI will produce nonsense that's dangerously passable as the truth. So there is a strong need for engineered guardrails for verification; for example, a mechanism to retrieve and quote the exact sources of a piece of information.
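To make the guardrail idea a little more concrete, here is a minimal sketch of "answer only from known content, and always quote the source". It is an illustration under heavy assumptions: the two support snippets, the file names and the function names are all hypothetical, and the matching is crude keyword overlap rather than an actual AI model. The point is only the shape of the guardrail: quote a source, or refuse.

```python
import re

# Hypothetical support snippets; a real product would load its own site or help content.
DOCUMENTS = {
    "refunds.html": "Refunds are available within 30 days of purchase.",
    "shipping.html": "Standard shipping takes five to seven business days.",
}

def words(text):
    """Lower-case a text and split it into a set of simple word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def answer_with_source(question, documents=DOCUMENTS, min_overlap=2):
    """Return (quote, source) for the best matching snippet, or (None, None)."""
    question_words = words(question)
    best_source, best_score = None, 0
    for source, text in documents.items():
        score = len(question_words & words(text))  # shared words, a crude relevance score
        if score > best_score:
            best_source, best_score = source, score
    if best_score < min_overlap:
        return None, None  # refuse rather than guess
    return documents[best_source], best_source

print(answer_with_source("How many days does standard shipping take?"))
print(answer_with_source("Who won the world cup?"))
```

The first question is answered with an exact quote and the file it came from; the second falls outside the known content, so the sketch declines to answer instead of making something up.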

All in all, you should be using AI as part of your creative writing and information delivery processes.

Start today. Things are great, and will get massively better.

What you see is what you get

Well this is a bit closer to home for me, with all the amazing things we are building at Magic Studio.

The idea that visual information (in the form of pixels) can be processed coherently by AI systems is relatively recent, compared to text. However, I daresay it has somehow leapfrogged AI in other areas, including in capturing the popular imagination. Perhaps because our visual experiences are such a big part of who we are, and how we interact with the world.

The things that AI can do with visual information fall roughly into one of these categories:

- "Understand" and classify information against other information (other visual content, or text)

- Create visual information from input (either visual or text)

- Create maps of visual information over some space of classification (e.g. foreground/background, depth/distance, colour, patterns)

I wish I could make those simpler to understand and remember, so here's another shot:

- Classify

- Generate

- Map

Like text models, visual models also suffer from random departures from the expected truth, and misinterpretations of input. But outcomes in the visual space are not so binary, and this flexibility means the truly useful possibilities are much, much wider.

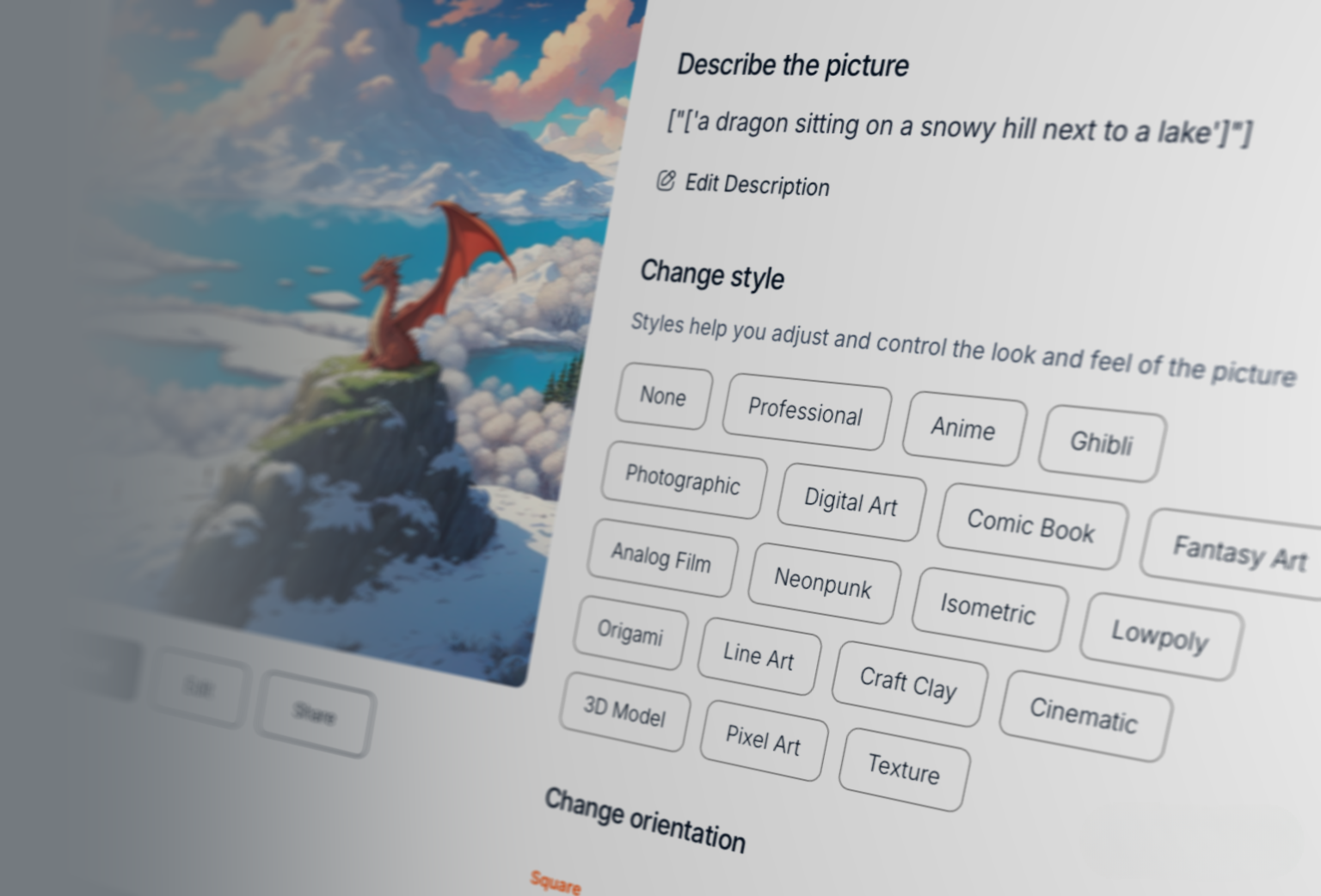

For example, these are all perfectly acceptable creations that fit the input "a dragon sitting on a snowy hill next to a lake":

dragon sitting on a snowy hill next to a lake in the style of Ghibli Studios

But how many ways can you say the same thing? Would any of them feel different?

That is the expansive promise of visual AI.

AI processes visual information in a few different ways.

One is the process of taking sets of pixels and correlating them with text that is "nearby"; maybe a caption, alt-text or titles on the page. This creates a virtual "space" on which you can map images to be near each other when they are similar. Incidentally, this "space" also covers the text associated with the images.
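Here is a tiny sketch of what "near each other in a space" can mean in practice. The three-number coordinates below are made up for illustration; a real system learns coordinates with hundreds of numbers from image and text pairs. Cosine similarity is one common way to measure closeness, and I am assuming it here rather than claiming any particular product uses it.

```python
import math

def cosine_similarity(a, b):
    """Closeness of two points by the angle between them: near 1.0 means very similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up coordinates for three images in a shared image-and-text "space".
IMAGE_SPACE = {
    "photo of a dragon": [0.9, 0.1, 0.2],
    "photo of a lake": [0.1, 0.9, 0.3],
    "photo of a horse": [0.2, 0.2, 0.9],
}

# Made-up coordinates for the text "dragon" in the same space.
TEXT_QUERY = [0.8, 0.2, 0.1]

def nearest(query, space=IMAGE_SPACE):
    """Return the name of the image whose coordinates are closest to the query."""
    return max(space, key=lambda name: cosine_similarity(query, space[name]))

print(nearest(TEXT_QUERY))
```

Because text and images live in the same space, a piece of text can be used to look up the images that sit closest to it.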

A second way is by changing the information in each pixel, often with awareness of the pixels around it and all the pixels in the instance, in a direction that brings the pixels closer to the idea that needs to be represented. This is loosely the process of diffusion. The idea is to start with random noise and end up with something coherent. While it is hard to visualise a process that goes from random noise to something meaningful, think of it as the opposite of just smudging a picture.

So the reverse of this demonstration by Matt Henderson:

you can simulate all sorts of dynamical systems just using photoshop filters.

noise and de-noise, repeated again and again, creates bands of spreading colours pic.twitter.com/9zbWDl9kK4

— Matt Henderson (@matthen2) December 18, 2022
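As a very rough illustration of the "start with random noise, end up with something coherent" idea, here is a toy sketch. It is not a real diffusion model: the `denoise_step` function is a hypothetical stand-in that cheats by already knowing the target picture, whereas a real model learns from data how to remove noise. The names, the 0.2 step size and the tiny 8-pixel "image" are all assumptions made for illustration.

```python
import random

def denoise_step(pixels, target, strength=0.2):
    """Stand-in for a learned denoiser: nudge every pixel a little toward the target.

    A real diffusion model does not know the target; it predicts the noise to remove.
    """
    return [p + strength * (t - p) for p, t in zip(pixels, target)]

def mean_error(pixels, target):
    """Average distance between the current pixels and the target pixels."""
    return sum(abs(p - t) for p, t in zip(pixels, target)) / len(target)

def generate(target, steps=50, seed=0):
    rng = random.Random(seed)
    pixels = [rng.random() for _ in target]  # start from pure random noise
    errors = [mean_error(pixels, target)]
    for _ in range(steps):
        pixels = denoise_step(pixels, target)  # many small clean-up steps
        errors.append(mean_error(pixels, target))
    return pixels, errors

# A tiny 8-pixel "image": dark on the left, bright on the right.
TARGET = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0]
final_pixels, errors = generate(TARGET)
print(f"error at start: {errors[0]:.3f}, error after 50 steps: {errors[-1]:.6f}")
```

The thing to notice is the shape of the process: no single step produces the picture, but many small clean-up steps in a row turn noise into something recognisable.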

A third way to process visual information is to map other dimensions or parameters over the pixels. Examples of this include colour information over a black and white image, or depth information (3D) over a 2D image, often with a set of images as part of the problem statement. All these cases are easy to understand as training AI with pairs of before and after cases, or input and output cases.

In reality, a lot of these ways intermingle in any given model to give us the powerful things we see in action today. For example, an AI model that generates images from text often uses a classifier to navigate the process toward outputs that are closer to the text input.

There is also one more trick that is used to feed the frenzy here; which is the creation of "synthetic data" that can explode the information available to build your AI. For example, people use game engines, which have been built over decades, to create 3D objects and render them on 2D screens, and use that to train mapping of 2D images to 3D depths.

So again, what can you do with all this today?

Before we answer that, I need to mention video. To most people video is just moving images. But in information terms, video is so much more. There is the axis of time, there are intricacies of motion, of frames. While a lot of what applies to images can be extended to video, the complexity of similar problems increases by 2-3 orders of magnitude. Meaning that if you want to create a video of a horse on a beach, it would take about 1000 times the computational wizardry that it would need to create a picture of a horse on a beach.
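One plausible way to get a feel for that "2-3 orders of magnitude" figure is simply to count frames. The sketch below assumes 24 frames per second and a few short clip lengths; both are my assumptions for illustration, and counting frames ignores the extra work of keeping motion consistent from one frame to the next.

```python
import math

def frames_in_clip(seconds, frames_per_second=24):
    """Number of still images that make up a clip (24 fps is an assumed frame rate)."""
    return seconds * frames_per_second

for seconds in (5, 10, 40):
    frames = frames_in_clip(seconds)
    magnitude = math.log10(frames)  # 2.0 means 100 images, 3.0 means 1,000 images
    print(f"{seconds:>2} s clip: {frames} frames, about 10^{magnitude:.1f} images")
```

Under these assumptions, even a clip of a few seconds is already more than a hundred pictures, and a clip of around 40 seconds is close to a thousand.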

And then there's audio. There's a version of audio that fits into the "everything else", but there is also a version of audio AI, mostly speech and effects for both video input and video output, that belongs here. The telling sign that this is actually part of visual expression is that people look for synthetic video tools that put a "face" to the "voice", and are not satisfied with just narration. Again, a problem that gets swept into the complexity of video.

So AI in video will progress correspondingly slowly, but in a field that's moving so rapidly, it's all gravy I guess.

Again, what do you take from all this?

Today you can use AI to reliably edit images, where a lot of nuance is not required. Typically erasing an object from images is super easy and powerful. These erased areas can also be filled in with patterns and textures, including artefacts of context (like shadows & lights), reasonably well. A lot of this needs tuned interactions with the model and layering of multiple models, so a substantial product engineering layer over the AI.

AI also manages to do a great job of breaking images up into their pieces (for example subject and background), with little or no input. The odd cases where it doesn't work can be worked around with surprisingly limited input. The most prudent products give users simple, intuitive inputs to guide the AI systems to give dependable results. This process also massively improves the range of creative expression without a barrier of skill or knowledge.

Thirdly, with text input for generating visual results, it is important to understand that our visual cognitive space is much more nuanced and varied. So it is important to treat AI generative systems accordingly.

If you say apple, and I say apple, while we both understand roughly the same thing, the pictures in our heads may be very different. In visual creative expression the creator's intent of what an image must look like is quite important, and giving room for iterative movements in that direction is critical. While AI model inputs are typically technical, it is important to distill them into usable, relevant and friendly inputs for the users.

Absolutely amazing products for creative expression are here today. While the AI does need a layer of control engineered into the products, the large canvas for visual expression makes this process both fun and effective. The upside of putting creative visual tools in people's hands, and liberating this process from the skill & knowledge barriers, is already showing up. Images, photos, pictures are further along; but video (and audio) is not far behind.

Everybody should have creative AI visual tools in their workflow today.

A lot of these basic tools are also free, so you can have fun, and switch to more intense versions for your serious work.

And then there's everything else!

Now the everything else might seem a bit pejorative, to those who are working on all the other things AI; but hear me out.

This is the class of AI that is perhaps going to be the most pervasive and valuable. How can I say something so sweeping about a catch-all category?

Because in one crucial way these systems all look similar: they deal with input from systems like these:

- Microphones (housed in speakers mostly)

- LIDAR sensors

- Cameras

- Industrial sensors (vibration for example)

- Personal sensors (body temperature for example)

You get the drift.

Some hardware or physical input, not originating in a human thought.

OK, maybe sometimes a human thought, when you speak to Alexa, Google or Siri, but even so the "everything else" part is taking your speech and converting it to text in a structured context; the other bits are handled by AI that deals with text.

The other end of the everything else AI takes all this input and outputs actions, decisions, information.

So when your car drives itself, AI. When your smart speaker listens to your voice, AI. When a camera at the traffic lights catches an offender, AI. When your TV adapts to the light, and changes the picture processing, AI. When your camera detects the moon and makes it look pretty, AI.

While this may be the most pervasive way in which AI makes the world better, it's not talked about a lot for 3 big reasons:

- It's not sexy

- It doesn't make for great stories

- Each individual problem doesn't seem large

If you are solving a problem today that involves large quantities of data that could help (cameras while driving, for example) or complex inputs that need to be translated to actions (voice commands to your smart speaker), you are probably already using AI.

If not, you should be.

What next?

I often think we tell ourselves that a certain fascinating piece of technology is futuristic, because the distance between today and the future makes it all believable and acceptable.

But with AI, it's already here, has been for some time and is only getting better in a hurry.

So make the most of it.

While nothing here is very prescient, or massively revelatory, I would like you to understand that everything about AI is changing rapidly, so don't quote me without the context of time and proportion.

In case you did not know it, AI is Artificial Intelligence. It's a very important ingredient in whatever mankind cooks up in the next few (or many) years.